We've gone through many iterations of ways to build, deploy and distribute applications written in Go at Cloud 66. Unlike Rails applications, Go applications can be web applications, daemons or CLIs, and each of these has different requirements. I'll share some of what we've learned with you in this post.

Setup

We use Google Cloud Build to build our Go apps. In our experience, Cloud Build has the best developer experience and is the most convenient to use, especially if you're running other things on GCP. We also tried Buildkite and GitHub Actions. Buildkite is as powerful as a fully configurable workflow-based bash script running on a cluster of machines, but it is almost always overkill for something like this. GitHub Actions has, without exception, been a disappointment with various levels of frustration.

For web applications and daemons written in Go, we always use Docker images as a distribution method since, ultimately, we will be running them in a Docker container. For CLIs written in Go, we build them using Google Cloud Build but distribute them in their executable form for different platforms.

Building Go web apps and daemons (services)

Google Cloud Build uses a manifest file called cloudbuild.yaml or cloudbuild.json for its builds. To build a Go app, you'd need a cloudbuild file and a Dockerfile:

Dockerfile

Here is an example of a Dockerfile for a service called agent:

This is a two-stage build: the first stage uses an image with the Go toolchain to compile the code, and the second stage uses a small image (Alpine in this case) to run the compiled executable.

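Note the CGO_ENABLED=0 before the build command: it disables cgo, so the executable does not link against the build image's C library, and this is what makes it work in Alpine, which ships musl rather than glibc. If you plan to use an OS other than Alpine for your final image, or need CGO in your Go code, you can drop it.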

As a convention for our Go apps, we always include the git SHA of the revision used to build the code in the executable. You can do this by including some code like this in your Go app:
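A minimal sketch of such a variable follows. It assumes a package-level string named Commit, as the build step described next expects. To keep the sketch runnable on its own it lives in package main, whereas the article's version lives in a utils package. The "dev" default and the VersionString helper are hypothetical additions for illustration.

```go
package main

import "fmt"

// Commit holds the git SHA of the revision the binary was built from.
// It has to be a package-level string variable so the linker can overwrite
// it with: go build -ldflags "-X <import path>.Commit=<sha>".
// "dev" is a hypothetical fallback for local builds that omit the flag.
var Commit = "dev"

// VersionString formats the commit for logs or a --version flag.
// (Hypothetical helper, not part of the original article.)
func VersionString(commit string) string {
	if commit == "" {
		commit = "unknown"
	}
	return "build " + commit
}

func main() {
	fmt.Println(VersionString(Commit))
}
```

For this sketch the linker flag would be `-X main.Commit=<sha>`. If the variable lives in another package, the flag needs that package's full import path in place of `main`.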

Now you can set Commit during the build step:

Note the SHORT_SHA argument (we will pass this into the build process later) and the ldflags params in build, including the package name (utils in this case).

cloudbuild.json

Now let's turn our attention to the cloudbuild.json file. I always prefer JSON to YAML because I don't like having to think about indentation in YAML. However, if you have large Cloud Build files, you might benefit from YAML since it supports comments.

In this file, we're defining two steps:

The first step uses Kaniko to build the Docker image from our Dockerfile. We use Kaniko on GCP Cloud Build because it takes care of the build without the need for a Docker daemon and automatically pushes the built image to the GCP Container Registry. In this step, we use the short git SHA of the commit being built as the image tag. We also pass SHORT_SHA, which GCP populates automatically, into the build as a build argument so that the ARG instruction in the Dockerfile can pick it up.

The second step is an optional one: it retags the image we just built with the latest tag.

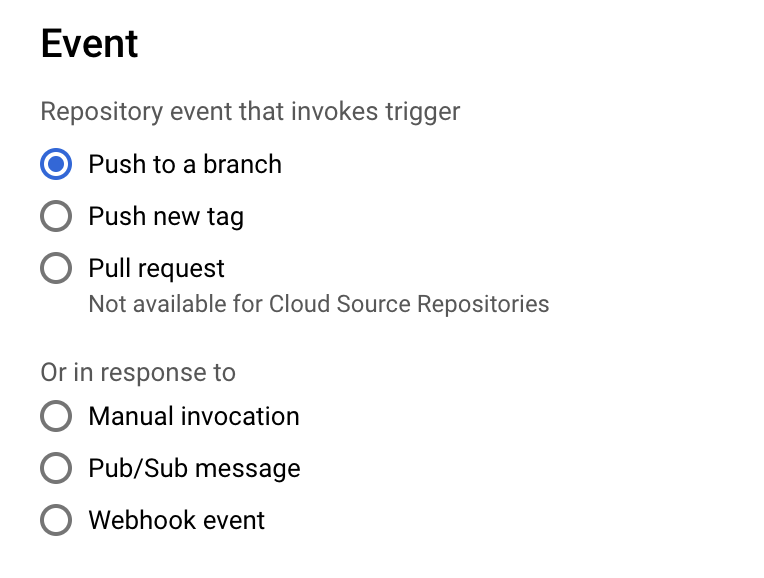

Cloud Build Setup (triggers)

All that's left now is to set up a trigger in GCP Cloud Build to build our image after every commit. For this, we usually create a trigger that starts the build on every commit to the main or master branch. Head to GCP Cloud Build, create a new trigger, connect your GCP project to your GitHub account, and select your code repo.

Bonus Step (deployment to Kubernetes)

If you use GKE clusters to run your applications, you can deploy to your cluster directly from here. Just add a deployment step to your cloudbuild.json:

For this, you'd need to have a deployment.yml file that contains your Kubernetes deployment manifest. You will also need to ensure your GCP build service account has the correct permissions to access your GKE cluster. This document includes some information on adding automatic deployments to your Cloud Build: https://cloud.google.com/build/docs/deploying-builds/deploy-gke

Building Go CLI apps

Building a CLI app written in Go is very similar to building other Go applications. The difference is usually in its distribution: CLI tools are distributed as binaries and need to be built for all platforms they support, not just Linux.

Cross-Platform Builds

For CLI tools, we don't keep a Dockerfile in the repository; instead, we use the GCP Cloud Build builder images. Let's take a look at a cloudbuild.yml that we use for CLI tools (this one is written in YAML):

This Cloud Build file has three steps:

Steps 1 and 2 build the tool for Mac and Linux. You can add more steps for other platforms, like Windows or ARM, by changing the GOOS and GOARCH environment variables to cross-compile.
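To make the GOOS and GOARCH mechanism concrete, here is a minimal Go sketch that prepares one `go build` invocation per target platform. It is an illustration, not the Cloud Build file itself: the Target type, the output naming scheme and the tool name mycli are all hypothetical, and the sketch only prints the commands rather than running them.

```go
package main

import (
	"fmt"
	"os"
	"os/exec"
)

// Target is one GOOS/GOARCH pair to cross-compile for.
type Target struct {
	GOOS   string
	GOARCH string
}

// OutputName returns a per-platform binary name, e.g. "mycli_linux_amd64".
// The naming scheme is an assumption made for this sketch.
func OutputName(tool string, t Target) string {
	name := fmt.Sprintf("%s_%s_%s", tool, t.GOOS, t.GOARCH)
	if t.GOOS == "windows" {
		name += ".exe"
	}
	return name
}

// BuildCommand prepares (but does not run) "go build" for one target.
// GOOS and GOARCH are appended last so they override inherited values.
func BuildCommand(tool string, t Target) *exec.Cmd {
	cmd := exec.Command("go", "build", "-o", OutputName(tool, t), ".")
	cmd.Env = append(os.Environ(), "GOOS="+t.GOOS, "GOARCH="+t.GOARCH, "CGO_ENABLED=0")
	cmd.Stdout = os.Stdout
	cmd.Stderr = os.Stderr
	return cmd
}

func main() {
	targets := []Target{
		{"darwin", "amd64"},
		{"linux", "amd64"},
		{"windows", "amd64"},
		{"linux", "arm64"},
	}
	for _, t := range targets {
		cmd := BuildCommand("mycli", t)
		// Call cmd.Run() here to actually build; this sketch only prints.
		fmt.Println("GOOS="+t.GOOS, "GOARCH="+t.GOARCH, cmd.Args)
	}
}
```

Each build step in a Cloud Build file does the equivalent of one loop iteration: same source, same `go build`, different environment variables.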

Optional Step: Generate version files

The last step is optional, and we use it to let CLI tools automatically update themselves if a newer version is available. For this, we use a simple setup:

- A version.json file that contains the latest version number of the tool.
- Some simple Go code to download version.json and compare the latest version with the executable's version.

Since we are building the code on GCP, we can use GCP Cloud Storage buckets to store both the binaries and the version.json files. You can have this bucket publicly available or restrict public access if your CLI tool is internal or private.

As you may have noticed, the last step of the build runs a bash file called replace.sh.

This simple bash file takes a single argument for the version and generates a version.json for it. Here is the version.template.json file:

Now that we have the latest version in the JSON file, we can upload the binaries and version.json to GCP Cloud Storage using the artifacts element of cloudbuild.yml, seen at the end of the file above.

The Go code that downloads version.json and the binaries can use httputils if your bucket is accessible publicly, or the cloud.google.com/go/storage library if it is private.
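As a rough sketch of the version check for the public-bucket case, the code below fetches version.json over HTTP and compares it with the running executable's version. Several things here are assumptions rather than the article's actual code: it uses the standard library's net/http, it assumes version.json looks like {"version": "1.4.2"}, and it assumes dotted numeric versions. The function names are hypothetical.

```go
package main

import (
	"encoding/json"
	"fmt"
	"io"
	"net/http"
	"strconv"
	"strings"
	"time"
)

// versionInfo mirrors an assumed version.json layout: {"version": "1.4.2"}.
type versionInfo struct {
	Version string `json:"version"`
}

// parseVersion turns "1.4.2" or "v1.4.2" into []int{1, 4, 2}.
func parseVersion(v string) ([]int, error) {
	parts := strings.Split(strings.TrimPrefix(strings.TrimSpace(v), "v"), ".")
	nums := make([]int, len(parts))
	for i, p := range parts {
		n, err := strconv.Atoi(p)
		if err != nil {
			return nil, fmt.Errorf("invalid version %q: %w", v, err)
		}
		nums[i] = n
	}
	return nums, nil
}

// IsNewer reports whether latest is a higher version than current.
// Missing components count as zero, so "2.0" equals "2.0.0".
func IsNewer(latest, current string) (bool, error) {
	l, err := parseVersion(latest)
	if err != nil {
		return false, err
	}
	c, err := parseVersion(current)
	if err != nil {
		return false, err
	}
	for i := 0; i < len(l) || i < len(c); i++ {
		var a, b int
		if i < len(l) {
			a = l[i]
		}
		if i < len(c) {
			b = c[i]
		}
		if a != b {
			return a > b, nil
		}
	}
	return false, nil
}

// FetchLatest downloads version.json from a publicly readable URL
// and returns the version string it contains.
func FetchLatest(url string) (string, error) {
	client := &http.Client{Timeout: 10 * time.Second}
	resp, err := client.Get(url)
	if err != nil {
		return "", err
	}
	defer resp.Body.Close()
	if resp.StatusCode != http.StatusOK {
		return "", fmt.Errorf("unexpected status %s", resp.Status)
	}
	body, err := io.ReadAll(io.LimitReader(resp.Body, 1<<20))
	if err != nil {
		return "", err
	}
	var info versionInfo
	if err := json.Unmarshal(body, &info); err != nil {
		return "", err
	}
	return info.Version, nil
}

func main() {
	// In a real tool, latest would come from FetchLatest(<bucket URL>)
	// and current from a variable set at build time.
	newer, err := IsNewer("1.4.2", "1.4.0")
	fmt.Println(newer, err) // prints "true <nil>"
}
```

Comparing numeric components rather than raw strings matters once a component reaches two digits: as strings, "1.10.0" sorts before "1.9.0".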